Astronomy has always pushed the boundaries of what humans can observe. For centuries, scientists relied on the naked eye, then optical telescopes, then giant radio dishes to gather signals from the sky. Today, artificial intelligence has become one of the most powerful tools in the astrophysicist’s toolkit. It is actively changing how researchers study everything from volcanic moons to the farthest reaches of space.

The universe generates data on a scale that no human team can process alone. A single night of observation from a modern telescope can produce terabytes of raw information. AI systems can sift through that information in a fraction of the time, completing work that would take entire research teams years. The outcome is a new era of discovery moving faster than any previous generation of scientists could have imagined.

This article covers five specific ways AI is advancing our understanding of the universe right now. Each example draws on real research programs and active missions. The science is happening today, not in some speculative future. Researchers are already reporting results that would have been impossible just a decade ago.

1. Generating High-Resolution Simulations of the Universe

Traditional cosmological simulations were often limited by computing costs. Scientists had to make trade-offs that left models too rough to capture fine-scale structure. The arrival of AI-driven methods has made it possible to run detailed, high-resolution models at a fraction of the previous cost. These models now show how dark matter shapes galaxy formation with a level of detail that was previously out of reach.

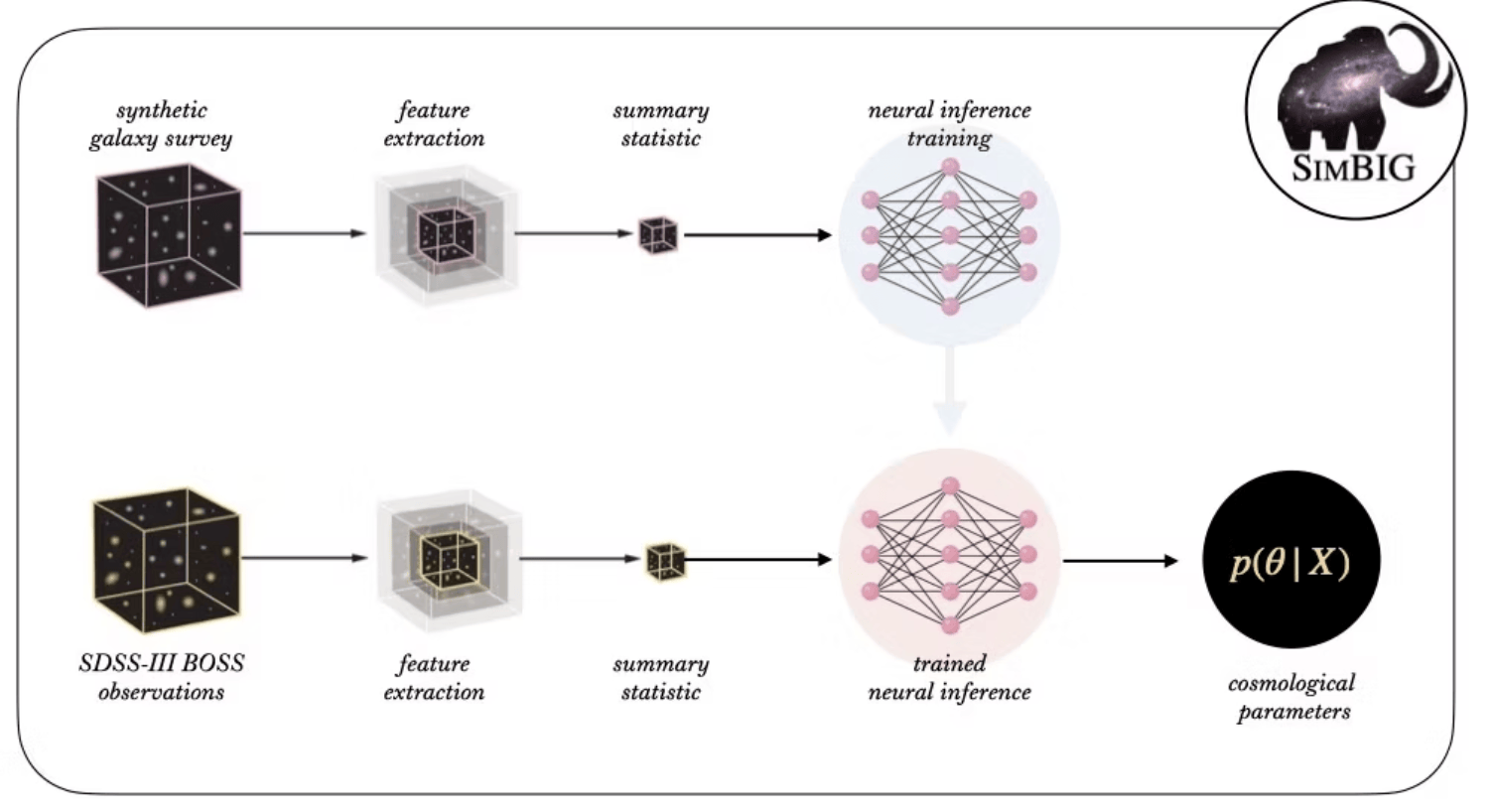

One key example is the SimBIG framework, which stands for Simulation-Based Inference of Galaxies. This system draws on advanced simulations to extract hidden information from the way galaxies cluster together. It has cut the uncertainty in measuring the “clumpiness” of matter in the universe by half. That single improvement is functionally equivalent to doubling the power of an existing telescope, with no new hardware required.
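To make the idea of simulation-based inference concrete, here is a minimal sketch in Python. It is not SimBIG's pipeline, which relies on far richer simulations and machine-learning inference. The one-parameter "clumpiness" model, the function names, and the tolerance are all assumptions chosen for illustration. The sketch runs a toy simulator many times with different parameter guesses and keeps only the guesses whose simulated output resembles the observation.

```python
import random
import statistics


def simulate(clumpiness, n_points, rng):
    """Toy forward model: density fluctuations whose spread equals `clumpiness`."""
    return [rng.gauss(0.0, clumpiness) for _ in range(n_points)]


def summary(data):
    """Compress a data set into one number, here its standard deviation."""
    return statistics.pstdev(data)


def infer_clumpiness(observed, n_draws=2000, tolerance=0.05, seed=0):
    """Keep the parameter guesses whose simulated data resemble the observation."""
    rng = random.Random(seed)
    target = summary(observed)
    accepted = []
    for _ in range(n_draws):
        guess = rng.uniform(0.1, 2.0)  # flat prior over the hypothetical parameter
        fake = simulate(guess, len(observed), rng)
        if abs(summary(fake) - target) < tolerance:
            accepted.append(guess)
    return accepted


# "Observe" a toy universe with a known answer (0.8), then try to recover it.
observed = simulate(0.8, 200, random.Random(1))
posterior = infer_clumpiness(observed)
print(f"{len(posterior)} accepted guesses, mean {statistics.mean(posterior):.2f}")
```

The spread of the accepted guesses plays the role of the measurement uncertainty. A more informative summary of the data narrows that spread, which is loosely the kind of gain the paragraph above describes.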

The ability to model the universe at this resolution carries broad scientific implications. Researchers can now test theories about dark matter more rigorously than before. They can also study how large-scale structures formed over billions of years of gravitational activity. AI makes it practical to run many versions of these simulations quickly and compare them systematically.

This kind of computational progress is especially important given the cost of space hardware. Building a new telescope can take decades of planning and billions of dollars in funding. AI allows scientists to extract far more value from the instruments they already operate. That is a practical and meaningful advance for a field that always needs to do more with limited resources.

2. Performing Digital Surgery to Restore Telescope Vision

Even the most advanced space telescopes carry hardware limitations. Electronic distortions, detector imperfections, and calibration drift can all degrade image quality over time. AI is now being used to correct these problems from the ground, without any physical repair mission. This approach saves enormous amounts of money and protects years of planned scientific operations.

The James Webb Space Telescope, commonly known as the JWST, is one clear example. Two PhD students developed an algorithm called AMIGO, which uses neural networks to fix a problem known as the “brighter-fatter effect.” This distortion occurs in the telescope’s infrared camera and blurs fine details across captured images. After applying AMIGO, the JWST produced the sharpest images yet of black hole jets and volcanic eruptions on Jupiter’s moon Io.

A second example involves the first-ever image of the M87* black hole, captured by the Event Horizon Telescope. Researchers applied an algorithm called PRIMO to re-analyze that original image. PRIMO examined 30,000 simulated black hole images to learn what real structures should look like. The refined result revealed a sharper view of the black hole, showing a central dark region that is larger and darker than the original image suggested.

These two cases demonstrate how AI extends the scientific life of expensive instruments. They also show that software development can be as impactful as physical hardware upgrades. A small team of researchers, including doctoral students, can produce corrections that improve results from billion-dollar observatories. That represents a meaningful shift in how cutting-edge science can be done.

3. Powering Real-Time Discovery Machines

Some of the most significant developments in astronomy involve detecting events that happen quickly and unpredictably. Exploding stars, fast-moving asteroids, and sudden flares of energy appear and fade within hours or days. Catching these events requires systems that scan the sky continuously and react without delay. AI makes this kind of real-time detection operationally feasible.

The Vera C. Rubin Observatory in Chile, which captured its first images in 2025, is one of the most ambitious examples of this approach. It is designed to scan the entire Southern Hemisphere sky every few nights. Each night, the observatory is expected to generate approximately seven million alerts about detected events. No human team could sort through that volume of data in time to act on it meaningfully.

AI brokers serve as the solution to that challenge. These are machine-learning systems that filter, classify, and prioritize alerts automatically. They can distinguish between a distant supernova and a moving asteroid within 60 seconds of image capture. Astronomers then receive targeted notifications and can schedule follow-up observations before an event fades or moves out of view.

This approach turns a telescope into something closer to an automated discovery engine. Scientists set scientific priorities in advance, and the AI handles the sorting and routing of alerts. The result is that rare and short-lived events are far less likely to be missed entirely. Research teams around the world gain access to timely, well-classified data without being overwhelmed by raw data volume.
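The filter, classify, and prioritize loop can be sketched in a few lines of Python. This is a toy stand-in, not the code of any real broker: the `Alert` fields, the thresholds, and the priority table are hypothetical, and simple rules take the place of the trained machine-learning classifiers that production systems use.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    alert_id: str
    motion_arcsec_per_hr: float  # apparent motion across the sky (hypothetical field)
    brightening_mag: float       # how much the source brightened (hypothetical field)


def classify(alert):
    """Rule-based stand-in for a trained classifier."""
    if alert.motion_arcsec_per_hr > 1.0:
        return "moving_object"        # e.g. an asteroid
    if alert.brightening_mag > 0.5:
        return "transient_candidate"  # e.g. a possible supernova
    return "other"


# Lower number = more urgent. Science teams would set this table in advance.
PRIORITY = {"transient_candidate": 0, "moving_object": 1, "other": 2}


def route(alerts):
    """Return (alert_id, label) pairs, most urgent first."""
    ranked = sorted(alerts, key=lambda a: PRIORITY[classify(a)])
    return [(a.alert_id, classify(a)) for a in ranked]


alerts = [
    Alert("a1", 0.0, 0.1),
    Alert("a2", 30.0, 0.0),
    Alert("a3", 0.0, 2.0),
]
for alert_id, label in route(alerts):
    print(alert_id, label)
```

Keeping the priority table separate from the classifier mirrors the division of labor described above: people decide what matters, and the software does the sorting.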

The Rubin Observatory is expected to survey the sky for ten years after it begins operations. Over that period, it will build the deepest and widest catalog of the known universe ever assembled. AI is what makes that scientific return possible at the speed required. Without it, the observatory’s full potential would remain largely out of reach.

4. Tuning Into the Bass of Gravitational Wave Signals

Gravitational waves are ripples in spacetime produced by some of the most violent events in existence. Detecting them requires instruments so sensitive that even tiny mechanical vibrations can drown out real signals. Until recently, this noise problem placed a ceiling on how many events detectors like LIGO could observe. AI is now helping scientists break through that ceiling.

A new method called Deep Loop Shaping uses reinforcement learning to manage the mirrors inside the LIGO observatory. These mirrors must remain almost perfectly still to detect the faint stretching of space caused by distant, violent collisions. The AI learns to keep them stable by continuously adjusting control systems in real time. This process reduces control noise by a factor of 30 to 100, depending on observational conditions.

That noise reduction has a direct scientific payoff. With cleaner signals, LIGO can detect events that were previously too faint to identify. Estimates suggest that this improvement could allow scientists to observe hundreds of additional black hole and neutron star collisions each year. Each new detected collision adds a data point to humanity’s understanding of how these extreme objects form and behave.

One area of particular scientific interest involves intermediate-mass black holes. These objects sit between the smaller stellar-mass black holes and the supermassive ones found at the centers of galaxies. Scientists have long believed they exist, and finding them in meaningful numbers would help explain how galaxies grow over time. Better gravitational wave detection may finally reveal them at a rate that allows real statistical analysis.

The use of reinforcement learning here is especially notable. The AI does not follow a fixed set of rules; it learns from experience how to control the mirrors more effectively. That adaptability allows it to respond to changing conditions in a way that traditional control systems cannot. It is a practical demonstration of how machine learning methods can solve problems in precision physics instrumentation.
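A toy model helps show why the controller itself can be a source of noise. The Python sketch below is not Deep Loop Shaping and is not reinforcement learning. It is a plain trial-and-error search over a single feedback gain, with a made-up one-dimensional "mirror" and made-up noise levels. A weak correction lets the mirror drift, while a strong one injects sensor noise back into the mirror, so the quietest setting lies in between.

```python
import random


def rms_motion(gain, steps=20_000, kick=0.1, sensor_noise=1.0, seed=0):
    """Simulate a 1-D mirror under feedback and return its RMS displacement."""
    rng = random.Random(seed)  # same seed for every gain, for a fair comparison
    position = 0.0
    total_sq = 0.0
    for _ in range(steps):
        measured = position + rng.gauss(0.0, sensor_noise)  # noisy position readout
        # Random disturbance pushes the mirror; the controller pushes back.
        position += rng.gauss(0.0, kick) - gain * measured
        total_sq += position * position
    return (total_sq / steps) ** 0.5


def tune_gain(candidates):
    """Trial and error: keep the gain that leaves the mirror quietest."""
    return min(candidates, key=rms_motion)


candidates = [0.01, 0.05, 0.1, 0.2, 0.5, 1.0]
best = tune_gain(candidates)
for gain in candidates:
    marker = "  <- quietest" if gain == best else ""
    print(f"gain {gain:>4}: RMS motion {rms_motion(gain):.3f}{marker}")
```

A learning-based controller faces a much harder version of this trade-off, with many coupled control loops and conditions that change over time.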

5. Enabling Autonomous Explorers on Mars

Space exploration has always faced a fundamental challenge rooted in the speed of light. Signals between Earth and Mars can take anywhere from 3 to 22 minutes to travel one way. This means ground controllers cannot react in real time to what a rover encounters on the surface. For years, that limitation forced mission teams to plan routes days in advance, based on limited orbital imagery.
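The 3-to-22-minute range follows directly from the distance between the two planets. The short Python calculation below uses commonly quoted approximate extremes for the Earth-Mars distance, about 54.6 million km at closest approach and about 401 million km at the farthest. Those two figures are assumptions added here, not values from the missions discussed in this article.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # defined value, in kilometers per second

# Approximate Earth-Mars distance extremes (assumed round figures).
CLOSEST_KM = 54.6e6
FARTHEST_KM = 401e6


def one_way_delay_minutes(distance_km):
    """Time for a radio signal to cover `distance_km`, in minutes."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60.0


print(f"closest:  {one_way_delay_minutes(CLOSEST_KM):.1f} minutes")
print(f"farthest: {one_way_delay_minutes(FARTHEST_KM):.1f} minutes")
```

A round trip doubles these figures, so a command sent in response to a rover image can arrive well over half an hour after the image was taken.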

In December 2025, NASA’s Perseverance rover completed its first series of drives planned entirely by generative AI. The system used vision-language models to analyze orbital images and surface terrain data. From that analysis, the AI mapped a continuous path while identifying hazards such as exposed bedrock and unstable sand ripples. This marked the first time an AI system acted as a full route planner on another planet.
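For readers who want a feel for what "mapping a path around hazards" means computationally, here is a minimal Python sketch. It is not the system used on Perseverance, which the article describes as relying on vision-language models. This example swaps in a classic breadth-first search over a hand-drawn grid, and the terrain map, symbols, and function name are invented for illustration.

```python
from collections import deque


def plan_route(grid, start, goal):
    """Breadth-first search on a grid. '#' cells are hazards.

    Returns the shortest list of (row, col) cells from start to goal,
    or None if every route is blocked.
    """
    rows, cols = len(grid), len(grid[0])
    came_from = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:  # walk back to the start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] != "#" and (nr, nc) not in came_from:
                came_from[(nr, nc)] = cell
                queue.append((nr, nc))
    return None


# Hypothetical terrain: S = start, G = goal, # = hazard, . = safe ground.
TERRAIN = [
    "S.#.",
    ".##.",
    "...G",
]
print(plan_route(TERRAIN, (0, 0), (2, 3)))
```

In this sketch the hazard map is handed to the planner ready-made. The hard part for a real system is producing such a map from orbital images and surface terrain data in the first place.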

The practical impact of this development is significant for mission science. Rovers can now cover more ground per day because they no longer wait for human-designed route plans to be uplinked from Earth. They can also respond to terrain features that orbital imagery does not fully capture at fine resolution. This increases the chance of finding scientifically valuable sites before a mission’s operational window closes.

Autonomous navigation also opens the door to bolder mission designs going forward. Future rovers and landers could be sent to regions that are too geologically complex for human-guided navigation to handle at a distance. AI pathfinding makes those targets reachable for science teams. As models improve, the gap between human decision-making and machine planning will continue to narrow in meaningful ways.

The Perseverance milestone also signals something broader about the state of the technology. Generative AI has progressed to the point where it can interpret visual environments and make spatial decisions reliably enough for operational use. The planetary science community is taking note of what this enables for future missions to Mars, Europa, and beyond. Autonomous surface exploration is no longer a distant concept; it is already underway.

Why This Matters for the Future of Science

Each of the five areas covered in this article represents a real shift in how scientific work operates. AI is not a distant promise for astronomy and space exploration. It is an active participant in current research, running alongside telescopes, rovers, and observatories right now. Scientists at every career stage are using these tools to do work that would otherwise be impossible.

The scale of what AI can process is one key factor in its growing role. Another is the speed at which it can act across millions of data points simultaneously. A third is the ability to learn from large datasets in ways that continue to improve over time. Together, these qualities make AI a genuine multiplier for scientific output across many research domains.

The field is also becoming more accessible because of AI-driven methods. Smaller research teams can now tackle problems that once required massive centralized computing infrastructure. Graduate students can build algorithms that correct flaws in space hardware worth billions. Real-time event detection means that scientists anywhere in the world can participate in live discovery on equal footing.

There is still much that AI cannot do on its own. It cannot replace the creative thinking that drives new hypotheses about how the universe works. It cannot make sense of data it has never been trained to interpret. It cannot ask questions that no one has yet thought to ask. Those tasks remain the domain of human researchers, and they always will be.

What AI does is remove bottlenecks that have slowed science for decades. It processes the data that human teams cannot. It corrects the errors that hardware cannot fix from orbit. It detects the events that would otherwise disappear before anyone notices them. And it traverses the terrain that human operators cannot guide from millions of miles away.

Conclusion

AI is actively reshaping how scientists study the universe across five major areas of research and exploration. High-resolution simulations are giving researchers a clearer picture of dark matter and galaxy formation without requiring new hardware. Software-based corrections are restoring and improving the vision of space telescopes through algorithms built by small teams. Real-time classification systems are making it possible to catch rare and short-lived astronomical events as they unfold across the sky.

Reinforcement learning is reducing noise in gravitational wave detectors, opening a wider observational window onto black hole and neutron star collisions. And generative AI is guiding rovers across Martian terrain with a level of independence that was not possible before December 2025. Taken together, these advances represent a meaningful acceleration in the pace of scientific discovery, driven by tools that continue to improve with each passing year of research and development.